“Always show your working out!” was the mantra of my maths teacher in senior school. This series of blog posts “On the Nature of Lean Portfolios” is an exploration of Lean Portfolios. It is the thought processes running through my mind, exploring the possibilities so that I understand why things are happening rather than just doing those things blindly. It is not intended to be a fait-accompli presentation of the Solutions within Lean Portfolios but an exploration of the Problems to understand whether the Solutions make sense. There are no guarantees that these discussions are correct, but I am hopeful that the journey of exploration itself will prove educational as things are learnt on the way.

Portfolio Use of Weighted Shortest Job First

The standard advice within SAFe is that, when it comes to prioritisation, you should use Weighted Shortest Job First (WSJF). It is a useful simplification that stops Cost of Delay discussions becoming unnecessarily complex, but there is more depth to the mechanic than people would assume from reading the SAFe webpage; depth that I’ve previously explored in a blog on The Subtleties of Weighted Shortest Job First.

Weighted Shortest Job First works at the Release Train level because timeboxes, the Program Increments, exist. The challenge is at the Portfolio level, where Epics can last for long periods of time; there I suspect that the “Shortest Job First” part of “Weighted Shortest Job First” is going to cause some problems. Because WSJF divides by the size of the job, large, long-running Epics are pushed down the list, so by its very nature it’s going to make it difficult to balance Short Term wins against Long Term investment, with the Long Term investment losing. Part of the reason for writing this blog series On The Nature Of Portfolios was to explore this very issue.

What follows over the next few posts is a series of “experiments” to explore the topic and look at the issues that arise. We’ll look at:

- WSJF using Total Epic Effort

- WSJF using Predicted Duration

- WSJF using Experimental Effort

- WSJF; what would Don do?

- WSJF considering Risk factors

- WSJF within the Investment Horizons

Test Scenario

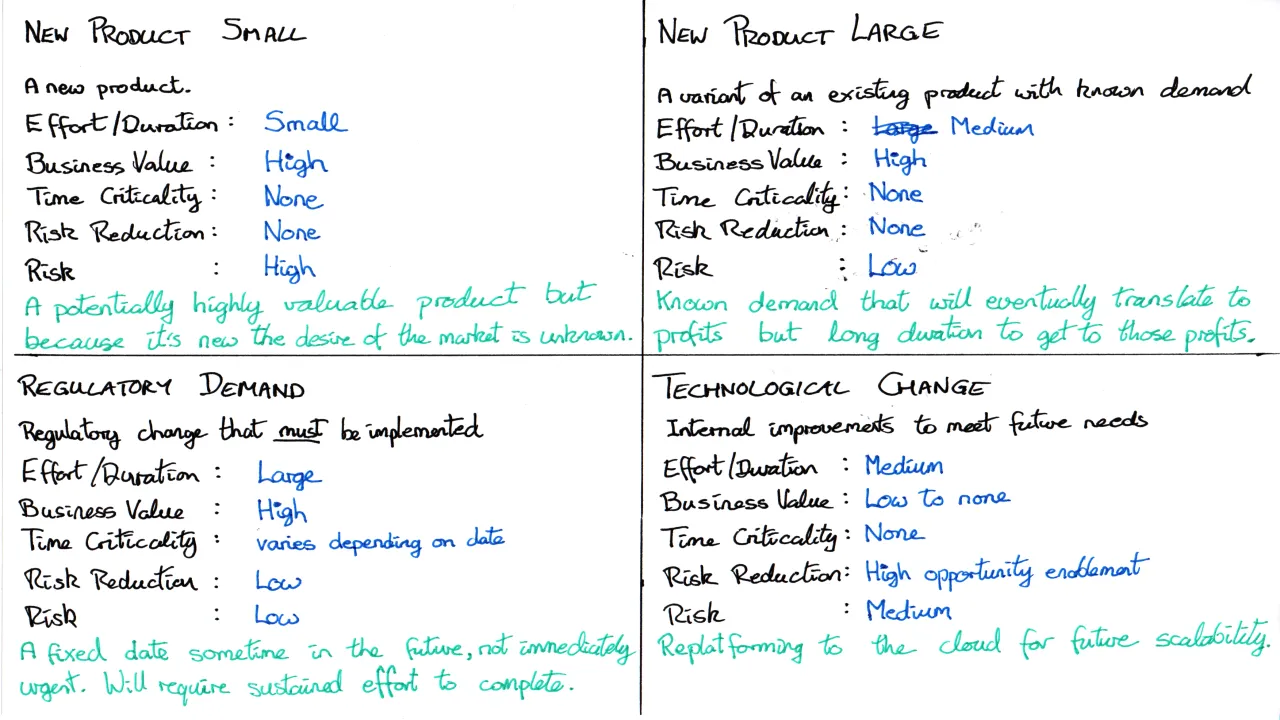

For this investigation we will run a set of Epics through different variations of the Weighted Shortest Job First algorithm to see how the scenarios develop. I think I’ve made the test data as sensible as possible, but only by running it through different scenarios will it be possible to tell whether it really is a sensible test set. I should also state that I do not know the right order in which to approach these topics, and this challenge exists in the real world as well: it’s hard to validate whether a particular sequencing of the work was the right one, because the alternative sequences can’t be tested to see whether they would have been better. Organisations do not go back and redo the work in a different sequence to see if they get better results; they ignore sunk costs, begrudgingly, and just have to get on with deciding what to do next.

WSJF using Total Epic Effort

As a first experiment the WSJF calculation will be run using Total Epic Effort for the Effort estimates.

| Epic | BV | TC | RR\|OE | CoD | Effort | WSJF |
|---|---|---|---|---|---|---|
| Product S | 13 | 1 | 1 | 15 | 1 | 15 |
| Product L | 8 | 1 | 1 | 10 | 5 | 2 |
| Regulatory | 21 | 1 | 1 | 23 | 8 | ~3 |
| Enabler | 1 | 1 | 5 | 7 | 5 | 1.4 |

Table 1: WSJF for PI 1

The numbers going into a WSJF can be very subjective, so some notes on the thinking that led to the above:

- At this point in time nothing is urgent; the deadline for the regulatory work is a long way away. Therefore Time Criticality (TC) is irrelevant, so each of the Epics is given a 1.

- Only the Enabler provides Risk Reduction, so it alone scores above 1 for Risk Reduction | Opportunity Enablement (RR|OE). Product S is risky from a business perspective (the public might not want it), but the act of doing it doesn’t actually reduce risk, so it scores a 1 for RR|OE.

- The Regulatory work has the highest Business Value (BV) because the cost of not being able to trade far outweighs any profits from new products.

- Effort involved should be fairly self-explanatory. The assumption for this experiment is that effort & duration are equivalent.
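The arithmetic behind Table 1 is simple enough to sketch. The snippet below is a minimal illustration, not a SAFe tool: it assumes, as the table does, that Cost of Delay (CoD) is the sum BV + TC + RR|OE and that WSJF is CoD divided by Effort, with Total Epic Effort standing in for job size. The `Epic` class and `rank` function are hypothetical names chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class Epic:
    name: str
    bv: int        # Business Value
    tc: int        # Time Criticality
    rr_oe: int     # Risk Reduction | Opportunity Enablement
    effort: float  # Total Epic Effort (treated as equal to duration in this experiment)

    @property
    def cost_of_delay(self) -> int:
        # CoD is the sum of the three relative-value components
        return self.bv + self.tc + self.rr_oe

    @property
    def wsjf(self) -> float:
        # WSJF divides Cost of Delay by job size (here: effort)
        return self.cost_of_delay / self.effort


def rank(epics: list[Epic]) -> list[Epic]:
    # Highest WSJF first: the sequence WSJF recommends
    return sorted(epics, key=lambda e: e.wsjf, reverse=True)


# Values from Table 1 (PI 1)
pi1 = [
    Epic("Product S", 13, 1, 1, 1),
    Epic("Product L", 8, 1, 1, 5),
    Epic("Regulatory", 21, 1, 1, 8),
    Epic("Enabler", 1, 1, 5, 5),
]

for epic in rank(pi1):
    print(f"{epic.name:<11} CoD={epic.cost_of_delay:>2} "
          f"Effort={epic.effort:g} WSJF={epic.wsjf:.2f}")
```

Ranking by WSJF gives Product S, Regulatory, Product L, Enabler, which is the order implied by Table 1.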

Observation: WSJF Assumes value is always returned

WSJF only considers the potential value in return and assumes that the value will be returned when the work is done. Is this right? Product S has high potential value, so WSJF prioritises it, but there is a risk that the promised value might not materialise. Other mechanics, such as the Lean Start-up technique, need to be used to turn a risky endeavour into a series of Risk Reduction Experiments. In this case, experiments to understand whether the public want the output of the Epic could be run before committing expensive engineering effort to it.

Observation: Effort is always “Effort to Complete”

In any Agile environment, effort estimates should always be estimates of the effort required to complete the remaining work. This can lead to some interesting challenges, because doing the work can reveal that more is involved than you at first thought. Time has passed in our experimental scenario and PI #2 arrives. Product S has hit some technical challenges. It consumed effort in PI #1 and the promised value is still there, but its remaining effort has increased to 2 despite the work already done.

| Epic | BV | TC | RR\|OE | CoD | Effort | WSJF |
|---|---|---|---|---|---|---|
| Product S | 13 | 1 | 1 | 15 | 2 | 7.5 |
| Product L | 8 | 1 | 1 | 10 | 5 | 2 |
| Regulatory | 21 | 1 | 1 | 23 | 8 | ~3 |
| Enabler | 1 | 1 | 5 | 7 | 5 | 1.4 |

Table 2: WSJF for PI 2

The promise of a high BV or RR|OE in the future can distort the WSJF if the effort estimate is always small.
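To make the distortion concrete, the sketch below compares Product S with the Regulatory Epic using only the numbers from Tables 1 and 2. However much effort Product S has already consumed, it stays at the top of the list as long as its effort-to-complete estimate stays below roughly 5.2, because WSJF never sees the sunk cost. The `wsjf` function and the constant names are illustrative only.

```python
def wsjf(cost_of_delay: float, effort_to_complete: float) -> float:
    """WSJF computed on the effort still remaining; sunk effort is ignored."""
    return cost_of_delay / effort_to_complete


PRODUCT_S_COD = 15
REGULATORY_COD = 23
REGULATORY_EFFORT = 8

# Product S's effort-to-complete: 1 at PI 1 (Table 1) and 2 at PI 2 (Table 2)
product_s_remaining = {1: 1, 2: 2}

regulatory_wsjf = wsjf(REGULATORY_COD, REGULATORY_EFFORT)
for pi, remaining in product_s_remaining.items():
    s_wsjf = wsjf(PRODUCT_S_COD, remaining)
    leader = "Product S" if s_wsjf > regulatory_wsjf else "Regulatory"
    print(f"PI {pi}: Product S WSJF={s_wsjf:.2f}, "
          f"Regulatory WSJF={regulatory_wsjf:.2f} -> {leader} first")

# Product S outranks Regulatory while 15 / e > 23 / 8, i.e. while e < 15 * 8 / 23
break_even_effort = PRODUCT_S_COD * REGULATORY_EFFORT / REGULATORY_COD
print(f"Product S stays ahead while its effort-to-complete is below {break_even_effort:.2f}")
```

Nothing in the calculation records how many PIs Product S has already absorbed, which is why the insight gained along the way has to come from somewhere else.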

Ignore Sunk Costs, Do Not Ignore Insight Gained!

The implication is that, for every Program Increment, some form of insight needs to be gained to inform the Epic assessment processes within the Portfolio.

Either:

- Lean-Startup experiments to provide Leading Indicators about future success, typically by reducing Risk factors.

- Business Outcomes showing progress towards actual success.

Another observation is that WSJF never stops anything; it deprioritises things, but it doesn’t stop them. A separate mechanic running alongside (or around) WSJF needs to look at each Epic’s metrics, its Business Outcomes and Leading Indicators, and assess whether the Epic should be allowed to continue.

Observation: Time Criticality – Duration ≠ Effort

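Without some careful reasoning about timings, large-effort items might not be prioritised until it is too late: dividing by a large effort keeps their WSJF low, and by the time their Time Criticality rises there may not be enough time left to complete them.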

Equally, large effort does not imply large duration because Value Streams/ARTs could swarm around the Epic and complete it in parallel.

In the example we’re experimenting with, the Regulatory Demand requires every Train in the Portfolio to contribute to the Epic and ensure that the solutions it owns are compliant. Each train can work independently of the others, so they can all work in parallel; in a swarming scenario Duration can be significantly shorter than Effort.

Work being done in parallel, Bad!

Teams swarming in parallel on work, Good!
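As a minimal sketch of why swarming breaks the link between effort and duration, assume (hypothetically; the scenario does not say how many trains there are or how the work divides) that the Regulatory Epic’s 8 units of effort split across four independent trains as 3, 2, 2 and 1. Applying this experiment’s effort-equals-duration assumption to each train individually, the Epic’s duration is set by the most heavily loaded train rather than by the total. The train names and function names are made up for the example.

```python
# Hypothetical split of the Regulatory Epic's 8 units of effort across trains.
# Train names and the split are illustrative assumptions, not scenario data.
regulatory_effort_by_train = {
    "Train A": 3,
    "Train B": 2,
    "Train C": 2,
    "Train D": 1,
}


def sequential_duration(effort_by_train: dict[str, float]) -> float:
    # Work done one share at a time: duration equals total effort
    return sum(effort_by_train.values())


def swarmed_duration(effort_by_train: dict[str, float]) -> float:
    # Trains work in parallel with no dependencies between them,
    # so the Epic finishes when the most heavily loaded train finishes
    return max(effort_by_train.values())


print("Total effort:", sequential_duration(regulatory_effort_by_train))
print("Duration when swarming:", swarmed_duration(regulatory_effort_by_train))
```

Under these assumptions the Epic still costs 8 units of effort but takes 3 units of time; any dependency between trains would push the duration back up.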

Prioritising for Time Criticality can’t be assessed without an understanding of timelines and roadmaps. Roadmaps can’t be constructed without an understanding of train capacity and the ability to swarm on the work. Roadmaps can’t be constructed without a priority to inform the sequencing.

A loop.

Prioritisation is informed by roadmaps; roadmaps are informed by prioritisation. Any process will therefore need to be iterative and repeated. The timeboxes of the agile world help with the repetition: roadmaps will need to be rebuilt with the latest information every Program Increment.
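One half of that loop, turning a priority order and a capacity into a roadmap, can be sketched as below. This is a hypothetical illustration, not a SAFe practice: the capacity of 5 units of effort per PI and the greedy “fill each PI in WSJF order” rule are assumptions, and `build_roadmap` is a made-up name. The Epic figures are the CoD and effort-to-complete values from Tables 1 and 2.

```python
# Each Epic: (name, cost_of_delay, effort_to_complete)
Epic = tuple[str, int, int]

CAPACITY_PER_PI = 5  # hypothetical portfolio capacity, in the same units as Effort


def build_roadmap(epics: list[Epic], capacity_per_pi: int,
                  start_pi: int) -> dict[str, tuple[int, int]]:
    """Greedily schedule Epics, highest WSJF first, into fixed-capacity PIs.

    Returns {name: (first_pi, last_pi)}. Efforts must be positive integers.
    """
    ordered = sorted(epics, key=lambda e: e[1] / e[2], reverse=True)
    roadmap: dict[str, tuple[int, int]] = {}
    pi = start_pi
    capacity_left = capacity_per_pi
    for name, _cod, effort in ordered:
        if effort <= 0:
            raise ValueError(f"{name}: effort must be positive")
        remaining = effort
        first_pi = None
        while remaining > 0:
            if capacity_left == 0:
                # Current PI is full: move on to the next one
                pi += 1
                capacity_left = capacity_per_pi
            if first_pi is None:
                first_pi = pi
            work = min(remaining, capacity_left)
            remaining -= work
            capacity_left -= work
        roadmap[name] = (first_pi, pi)
    return roadmap


pi1_epics = [("Product S", 15, 1), ("Product L", 10, 5),
             ("Regulatory", 23, 8), ("Enabler", 7, 5)]
pi2_epics = [("Product S", 15, 2), ("Product L", 10, 5),
             ("Regulatory", 23, 8), ("Enabler", 7, 5)]

pi1_roadmap = build_roadmap(pi1_epics, CAPACITY_PER_PI, start_pi=1)
pi2_roadmap = build_roadmap(pi2_epics, CAPACITY_PER_PI, start_pi=2)
for label, roadmap in [("PI 1", pi1_roadmap), ("PI 2", pi2_roadmap)]:
    print(f"Roadmap built at {label}:")
    for name, (first, last) in roadmap.items():
        print(f"  {name:<11} PI {first} to PI {last}")
```

Rebuilding at PI 2 with Table 2’s figures moves Product L from PI 2 to 3 out to PI 4, which is the rebuild-every-PI step described above. The other half of the loop, feeding the roadmap dates back into Time Criticality, is left out because it would need the regulatory deadline, which the scenario does not give.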

Next Steps

In the next post we’ll run an experiment to see what happens to Weighted Shortest Job First if, instead of Total Epic Effort, we use Predicted Duration, which can incorporate team swarming.